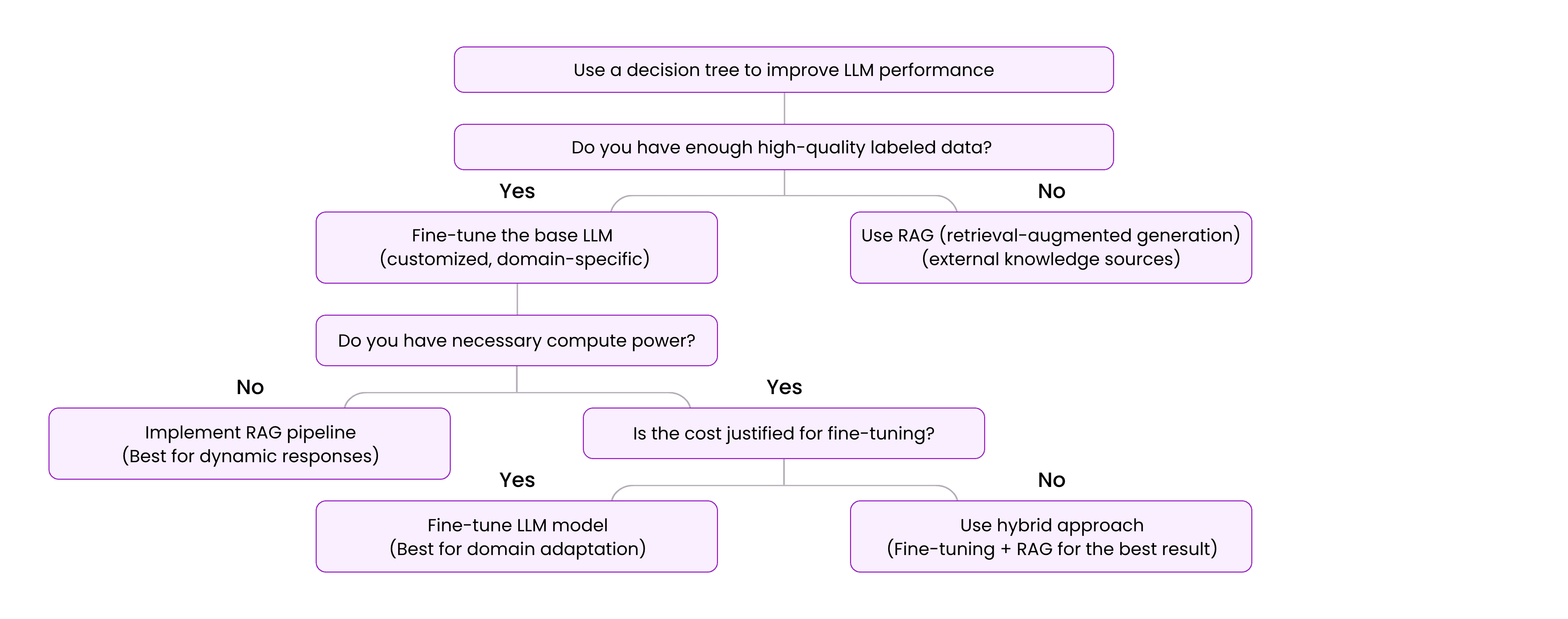

Organizations integrating AI into their operations face a critical decision: whether to fine-tune a large language model (LLM) for task-specific proficiency or use retrieval-augmented generation (RAG) to dynamically pull in external knowledge.

This choice depends on whether the AI needs to generate highly specialized, deterministic responses—such as in financial reporting or legal document drafting—or provide context-aware, real-time insights, as seen in customer service chatbots and analytics. Each approach carries distinct implications for cost, scalability, adaptability, and output quality, all of which influence how AI enhances business processes.

Fine-tuning retrains an LLM on proprietary data, embedding deep expertise directly into the model, which is essential for precision, consistency, and specialized terminology. By contrast, RAG retrieves information from external sources in real time, making it ideal for applications requiring fresh, dynamically updated knowledge.

Understanding when to apply each method is crucial to developing AI solutions that are not only technically sound but also strategically aligned with business priorities.

Fine-tuning can deliver precision through specialization

Fine-tuning an LLM involves retraining it on a curated dataset to embed domain-specific knowledge, refining its outputs for greater precision, consistency, and relevance. This approach is particularly valuable in scenarios requiring accuracy and predictability, allowing AI-generated content to align with established guidelines, regulations, or proprietary expertise.

Fine-tuning is most effective when models must operate within a fixed knowledge base, prioritize deterministic outputs, and maintain specialized performance over time, which makes it well suited to use cases where stability and compliance are paramount.
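To make the idea of a curated dataset concrete, here is a minimal sketch of how prompt and response pairs might be cleaned, deduplicated, and split into training and validation sets before fine-tuning. The field names (`prompt`, `completion`), the 20% validation share, and the sample records are illustrative assumptions, not a format required by any particular provider.

```python
import json
import random

def prepare_dataset(records, validation_fraction=0.2, seed=0):
    """Clean, deduplicate, and split prompt/completion pairs."""
    seen = set()
    cleaned = []
    for record in records:
        prompt = record.get("prompt", "").strip()
        completion = record.get("completion", "").strip()
        # Skip incomplete examples and exact duplicates.
        if not prompt or not completion or (prompt, completion) in seen:
            continue
        seen.add((prompt, completion))
        cleaned.append({"prompt": prompt, "completion": completion})

    # Shuffle with a fixed seed so the split is reproducible.
    random.Random(seed).shuffle(cleaned)
    n_validation = int(len(cleaned) * validation_fraction)
    return cleaned[n_validation:], cleaned[:n_validation]

def to_jsonl(examples):
    """Serialize examples as JSON Lines, one example per line."""
    return "\n".join(json.dumps(example) for example in examples)

# Hypothetical records, for illustration only.
raw_records = [
    {"prompt": "Define 'indemnification'.",
     "completion": "A promise by one party to cover another party's losses."},
    {"prompt": "  Define 'indemnification'.  ",
     "completion": "A promise by one party to cover another party's losses."},
    {"prompt": "Define 'force majeure'.",
     "completion": "A clause excusing performance after extraordinary events."},
    {"prompt": "", "completion": "An answer with no question."},
    {"prompt": "Define 'severability'.",
     "completion": "A clause keeping the rest of a contract valid if one part fails."},
    {"prompt": "Define 'governing law'.",
     "completion": "A clause naming the jurisdiction whose law applies."},
    {"prompt": "Define 'assignment'.",
     "completion": "A transfer of contractual rights to another party."},
]

train_set, validation_set = prepare_dataset(raw_records)
print(to_jsonl(train_set))
```

Holding back a validation set at this stage is what later makes it possible to check whether the fine-tuned model generalizes beyond the examples it was trained on.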

Fine-tuning is suitable for domain-specific expertise

Some industries, such as medicine, law, and engineering, require AI models with deep, specialized knowledge, as incorrect information can have serious consequences. Fine-tuning allows organizations to train models on proprietary datasets, embedding expertise that makes AI function like an industry insider rather than a generalist.

Application: A pharmaceutical company developing an AI-driven drug research system might fine-tune an LLM with clinical trial data, medical journals, and FDA reports. This enables the model to generate accurate drug interaction analyses while aligning with regulatory standards and internal research protocols, avoiding misinterpretations of complex biomedical relationships.

Fine-tuning is appropriate when terminology and context sensitivity are essential

Industries with specialized vocabularies require AI models that not only recognize words but also understand their meaning within a specific domain.

General-purpose LLMs can misinterpret technical terms, leading to errors in legal contracts, financial reports, or scientific analyses. Fine-tuning helps models internalize domain-specific language, improving clarity and reducing misinterpretation.

Application: A legal firm using AI for contract drafting and case law summaries benefits from a model trained on legal documents, statutes, and precedents. This allows the AI to recognize complex legal terms, apply them in context, and generate precise contracts and summaries that align with regulatory nuances.

Deterministic outputs for repetitive tasks require fine-tuning

In some business functions, AI-generated outputs must be predictable, standardized, and repeatable. While slight variations may be acceptable in conversational AI, tasks like financial reporting, regulatory compliance, and manufacturing quality control demand absolute consistency.

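Fine-tuning reduces this variability by teaching the model a fixed format and style. Paired with deterministic decoding settings, such as a temperature of zero, it yields consistent outputs for the same inputs, which is critical for operational reliability.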

Application: A financial services company might fine-tune an AI model to generate quarterly earnings reports, adhering to strict formatting guidelines, specific financial calculations, and regulatory disclosure requirements, automating report generation with near-zero variation.
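Consistency also depends on how a model's output is decoded, not only on how the model was trained. The sketch below uses a toy next-word choice to contrast greedy decoding, which always picks the highest-scoring option, with temperature sampling, which can vary from run to run. The tokens and scores are hypothetical values chosen for illustration.

```python
import math
import random

def softmax(scores, temperature):
    """Convert raw scores into probabilities at a given temperature."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy_pick(tokens, scores):
    """Always choose the highest-scoring token: same input, same output."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return tokens[best]

def sampled_pick(tokens, scores, temperature, rng):
    """Draw a token at random in proportion to its softmax probability."""
    weights = softmax(scores, temperature)
    return rng.choices(tokens, weights=weights, k=1)[0]

tokens = ["revenue", "income", "sales"]
scores = [2.0, 1.5, 0.5]  # hypothetical model scores for the next word

greedy_runs = {greedy_pick(tokens, scores) for _ in range(100)}
rng = random.Random(7)
sampled_runs = {sampled_pick(tokens, scores, 1.0, rng) for _ in range(100)}

print("greedy outputs:", greedy_runs)
print("sampled outputs:", sampled_runs)
```

In a reporting workflow, pinning the decoding settings alongside the fine-tuned model is one way to keep repeated runs aligned.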

Enhancing model performance in specialized use cases requires fine-tuning

Even powerful base LLMs can struggle with regional dialects, niche technical fields, or interactions requiring deep contextual awareness. Fine-tuning helps bridge these gaps, refining a model to perform better in areas where it initially falls short. For example, a speech-to-text application may excel with standard English but struggle with specialized accents or industry jargon.

Application: A telecommunications company could fine-tune the model with audio datasets featuring regional accents and multilingual inputs, improving transcription accuracy for call centers, accessibility services, and customer support platforms serving diverse users.

Fine-tuning presents challenges

Fine-tuning offers significant advantages in precision and specialization, but it comes with challenges organizations must consider before committing to this approach:

High computational and data costs. Fine-tuning requires retraining LLMs with large, domain-specific datasets, demanding substantial computing power, high-performance GPUs or TPUs, and skilled AI professionals. For organizations without dedicated AI infrastructure, cloud-based training and model optimization costs can be prohibitive.

Risk of overfitting. A fine-tuned model can become so specialized that it struggles to generalize beyond its training data. While it may excel in a narrow domain, unexpected queries or broader applications can pose challenges, requiring careful training data selection and validation testing to maintain flexibility.

Ongoing maintenance and retraining costs. A fine-tuned model trained on static data can quickly become outdated, necessitating periodic retraining to reflect regulatory updates, industry standards, or emerging trends. This increases long-term costs and operational complexity, making retrieval-based approaches like RAG a more practical solution for businesses prioritizing scalability and adaptability.

Fine-tuning remains a valuable strategy for organizations requiring precision, consistency, and deterministic outputs, but its resource demands and maintenance needs must be carefully weighed against alternatives.
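One common guard against the overfitting risk described above is to track loss on held-out validation data and keep the checkpoint from the point where that loss stops improving. The sketch below assumes per-epoch losses are already available; the numbers and the `patience` setting are hypothetical.

```python
def early_stopping_epoch(validation_losses, patience=2):
    """Return the index of the best epoch, stopping once validation loss
    has failed to improve for `patience` consecutive epochs."""
    best_epoch = 0
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return best_epoch

# Hypothetical losses: training loss keeps falling while validation
# loss turns upward, a typical sign of overfitting.
training_losses = [1.20, 0.80, 0.55, 0.40, 0.30, 0.22]
validation_losses = [1.25, 0.90, 0.70, 0.72, 0.78, 0.85]

best = early_stopping_epoch(validation_losses)
print(f"Keep the checkpoint from epoch {best}: "
      f"train={training_losses[best]}, validation={validation_losses[best]}")
```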

RAG offers contextualization through flexibility

Unlike fine-tuning, which embeds domain knowledge directly into a model, RAG dynamically retrieves relevant information from external sources at the time of inference, keeping AI-generated outputs updated without costly retraining. This approach is ideal for environments where information changes frequently, responses must remain contextually relevant, and adaptability is a priority.
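The retrieve-then-generate flow can be sketched in a few lines. This example ranks documents by word overlap (cosine similarity over word counts) and places the best match into the prompt. Production systems typically use an embedding model and a vector index instead; the word-count scoring here is a simple stand-in, and the knowledge base entries and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine_similarity(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, top_k=1):
    """Return the top_k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(query, documents, top_k=1):
    """Assemble the prompt an LLM would receive at inference time."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge base; updating these entries changes the
# answers without retraining the model.
knowledge_base = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards never expire and cannot be refunded.",
]

prompt = build_prompt("How long does shipping take?", knowledge_base)
print(prompt)
```

The model itself never changes in this flow. Only the knowledge base does, which is why RAG avoids retraining when information is updated.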

By incorporating the most current data available, RAG helps AI-driven decisions stay aligned with real-world developments. However, this flexibility comes with trade-offs, including dependence on external data quality and potential delays due to real-time retrieval. Here are some RAG use cases:

Use RAG when you need to adapt to dynamic contexts and real-time updates

Many industries need AI models to generate responses based on rapidly evolving information, where static knowledge in a fine-tuned model may quickly become outdated. RAG solves this by dynamically retrieving up-to-the-minute data, keeping AI-generated responses timely and relevant.

Application: A news aggregator powered by AI can use RAG to pull in breaking news from live feeds and trusted sources, generating summaries that reflect the latest developments. This allows users to receive accurate, current information even in fast-changing news cycles.

RAG delivers cost-efficiencies and scalability

Fine-tuning an LLM demands significant computational resources, skilled personnel, and ongoing maintenance, while RAG provides a cost-effective alternative by retrieving information from external sources instead of requiring frequent retraining. This makes RAG appealing for businesses scaling AI solutions without high infrastructure costs.

Application: Consider an ecommerce chatbot. Fine-tuning would need constant retraining to reflect inventory updates and pricing changes. With RAG, the chatbot can pull live inventory data directly from the retailer’s database, keeping recommendations accurate without expensive model adjustments.

RAG can mitigate model drift

Fine-tuned models can experience model drift, where their outputs become less relevant as the data landscape evolves. RAG mitigates this by retrieving the latest available information instead of relying on a fixed training set.

Application: A financial advisory platform using RAG can pull real-time market trends, economic reports, and regulatory updates to provide investment insights that reflect current conditions. This keeps data accurate and relevant without requiring frequent model retraining.

RAG supports multi-context applications

Some AI applications must operate across multiple domains, industries, or languages, requiring flexible knowledge retrieval. RAG allows a single model to adapt dynamically to different contexts, keeping responses relevant regardless of the user’s location, language, or specific needs.

Application: A multilingual customer support system can use RAG to retrieve region-specific answers in different languages, providing responses tailored to local regulations, cultural preferences, and product availability. This flexibility makes RAG an effective solution for businesses serving diverse customer bases.
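One way a retrieval layer can serve several regions and languages from a single model is to filter the knowledge base on metadata before ranking. The sketch below shows that filtering step only; the `Article` fields, the English fallback, and the sample return windows are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Article:
    region: str
    language: str
    text: str

def select_articles(articles, region, language, fallback_language="en"):
    """Prefer articles in the user's language for their region, and
    fall back to a default language if none exist."""
    in_region = [a for a in articles if a.region == region]
    preferred = [a for a in in_region if a.language == language]
    if preferred:
        return preferred
    return [a for a in in_region if a.language == fallback_language]

# Hypothetical support articles, for illustration only.
articles = [
    Article("DE", "de", "Widerruf innerhalb von 14 Tagen."),
    Article("DE", "en", "Returns are possible within 14 days."),
    Article("US", "en", "Returns are possible within 30 days."),
]

for article in select_articles(articles, region="DE", language="de"):
    print(article.text)
```

Filtering by region first keeps a customer in one market from receiving a policy that applies only in another, even when both are written in the same language.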

RAG presents challenges

RAG provides a scalable and adaptable alternative to fine-tuning, but it introduces its own set of challenges:

Infrastructure demands. Implementing RAG requires a robust retrieval pipeline and well-curated knowledge bases to maintain the accuracy and reliability of retrieved data. Maintaining these systems can be complex and resource-intensive.

Dependence on external data. The quality of RAG-generated responses depends entirely on the accuracy and credibility of the sources it retrieves from. If the external data is outdated, biased, or incomplete, the model’s output may suffer.

Latency concerns. Because RAG performs real-time data retrieval, response times may be slower compared to fine-tuned models, particularly in scenarios that require complex multi-step retrieval processes.

Even so, RAG remains a powerful tool for organizations that prioritize adaptability, cost-efficiency, and access to real-time knowledge. Weigh these benefits against the potential trade-offs when deciding whether RAG is the right approach for your AI applications.
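A common way to soften the latency concern is to cache retrieval results for a short period, so repeated questions skip the slow lookup. The sketch below is a minimal time-to-live cache. The class name, the 60-second window, and the stand-in `slow_retrieve` function are assumptions for illustration.

```python
import time

class TTLCache:
    """Cache retrieval results briefly to avoid repeated slow lookups."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._entries = {}

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            return entry[1]  # still fresh: skip the slow retrieval
        value = fetch(key)
        self._entries[key] = (now, value)
        return value

calls = []

def slow_retrieve(query):
    """Stand-in for a real retrieval call; records each invocation."""
    calls.append(query)
    return f"documents for {query!r}"

cache = TTLCache(ttl_seconds=60)
first = cache.get_or_fetch("refund policy", slow_retrieve)
second = cache.get_or_fetch("refund policy", slow_retrieve)
print(f"retrieval calls made: {len(calls)}")
```

The time-to-live is the trade-off knob: a longer window means faster responses but staler data, so it should be shorter than the rate at which the underlying information changes.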

Compare RAG to fine-tuning

| Criteria | Fine-tuning | RAG |
|---|---|---|
| Cost | High (due to retraining) | Relatively low (avoids retraining) |
| Adaptability | Limited to specific domains | Highly flexible across dynamic contexts |
| Response time | Fast for pre-trained … | Potentially slower due to real-time retrieval |
| Knowledge updates | Requires retraining | Accesses updated knowledge |
| Best use cases | Deterministic and niche tasks | Dynamic, multi-context, and real-time applications |

Choose carefully before deciding whether to fine-tune or use RAG

Choosing between fine-tuning a base LLM and using RAG is not a straightforward decision. Each approach comes with trade-offs, and the right choice depends on your specific needs, resources, and operational goals.

Before committing to one method, you should carefully evaluate several critical factors that influence AI performance, cost-effectiveness, and long-term viability.

Weigh task characteristics: static precision vs. dynamic adaptability

The task an AI model performs determines whether fine-tuning or RAG is the better approach. Some applications require static, deterministic outputs for consistency and predictability, while others need real-time adaptability to reflect the latest information.

Financial reporting systems and legal contract automation benefit from fine-tuning, as they require structured, repeatable outputs aligned with established rules and terminology. In contrast, AI-driven customer support systems and market analysis tools may function better with RAG, pulling in current, contextually relevant information rather than relying on static knowledge.

What are your budget constraints?

Fine-tuning a model demands significant upfront investment in computing resources, specialized personnel, and ongoing maintenance, making it impractical for organizations with limited budgets or frequent update requirements.

In contrast, RAG offers a more cost-efficient alternative by retrieving real-time knowledge from external sources, allowing businesses to scale AI capabilities without extensive retraining. This makes RAG especially appealing for companies without dedicated AI infrastructure or those needing a solution that remains effective without periodic adjustments.

How frequently do you need to perform updates?

Industries like healthcare, finance, and technology require AI models that keep pace with evolving information, making fine-tuning challenging as static datasets quickly become outdated and require costly retraining.

RAG addresses this by retrieving the most up-to-date information at the moment of inference, making it a practical choice for applications reliant on timely knowledge, such as news aggregation, regulatory compliance monitoring, or real-time investment analysis. For fields where accuracy depends on staying current, RAG offers greater long-term adaptability than a fine-tuned model.

What are your scalability needs?

Some AI applications require deep expertise in a single domain, while others must adapt across industries, regions, or user groups. Fine-tuning is ideal for tasks demanding in-depth understanding, such as scientific research, legal documentation, or regulated business processes.

However, for AI systems serving multiple audiences, languages, or topics, RAG offers a more scalable solution by dynamically retrieving context-specific knowledge. This allows a single model to function effectively across diverse use cases without needing separate fine-tuned versions, making RAG a preferred choice for global customer service, multilingual AI applications, and industries requiring contextual flexibility.
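The four factors above can be collected into a simple checklist. The sketch below is an illustrative way to organize the discussion, not a validated scoring method. The function name, the parameters, and the wording of each reason are assumptions drawn from the criteria in this article.

```python
def summarize_fit(*, needs_repeatable_output=False, stable_domain_knowledge=False,
                  knowledge_changes_often=False, limited_budget=False,
                  serves_many_contexts=False):
    """Group the reasons that point toward each approach."""
    reasons = {"fine-tuning": [], "RAG": []}
    if needs_repeatable_output:
        reasons["fine-tuning"].append("structured, repeatable outputs are required")
    if stable_domain_knowledge:
        reasons["fine-tuning"].append("deep expertise in a stable domain is needed")
    if knowledge_changes_often:
        reasons["RAG"].append("information changes frequently")
    if limited_budget:
        reasons["RAG"].append("budget for retraining is limited")
    if serves_many_contexts:
        reasons["RAG"].append("multiple audiences, languages, or topics are served")
    return reasons

# Hypothetical profile: a global customer support assistant.
support_bot = summarize_fit(knowledge_changes_often=True,
                            serves_many_contexts=True)
for approach, why in support_bot.items():
    print(approach, "->", why or "no strong signal")
```

When both lists fill up, the factors pull in different directions, and the trade-offs need to be weighed case by case rather than settled by a count.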

Make the right choice for your business

No single approach is universally superior. You must weigh your task requirements, budget, need for frequent updates, and scalability goals to determine the most effective strategy.

Fine-tuning delivers precision and consistency but requires ongoing investment and is best suited for specialized, unchanging tasks. RAG, on the other hand, offers flexibility, cost savings, and real-time knowledge retrieval, making it an excellent choice for applications that demand adaptability and continuously updated knowledge.

By carefully assessing these factors, you can make an informed decision about which method best aligns with your operational needs.

A frontier AI data foundry platform can help you

A frontier AI data foundry platform is a centralized system designed to manage, process, and analyze data from diverse sources. The platform manages the entire AI lifecycle, from data collection to deployment.

A frontier AI data foundry platform supports both fine-tuning LLMs and implementing RAG by integrating data, models, and workflows into a cohesive framework. It streamlines fine-tuning, enabling greater precision and efficiency for specialized tasks while also facilitating dynamic retrieval of up-to-date information to enhance contextual relevance in real-time applications.

In the context of RAG, the platform provides the infrastructure to manage and access external knowledge bases, allowing AI models to incorporate current data without constant retraining. By serving as a centralized hub for data management, it maximizes the value of both fine-tuning and RAG methodologies.

Learn more about Centific’s frontier AI data foundry platform.
